The PC processor: what it is made of. What you need to know about the computer's central processing unit

In fact, what we call a processor today is properly called a microprocessor. The difference is determined by the type of device and its historical development.

The first microprocessor, the Intel 4004, appeared in 1971.

Outwardly, it is a silicon wafer carrying millions, and today billions, of transistors and channels for passing signals.

The purpose of the processor is the automatic execution of programs. In other words, it is the main component of any computer.

Processor structure

The key components of a processor are the arithmetic logic unit (ALU), the registers, and the control unit. The ALU performs basic mathematical and logical operations. All calculations are carried out in binary. The control unit coordinates the parts of the processor itself and its communication with other, external devices. The registers temporarily store the current command and the initial, intermediate, and final data (the results of ALU calculations). General-purpose registers usually all have the same width.

The data and instruction cache stores frequently used data and commands. Cache access is much faster than RAM access, so a larger cache generally means better performance.

Processor scheme

Processor operation

The processor runs under the control of a program located in RAM.

(The work of the processor is more complicated than the diagram above shows. For example, data and commands do not enter the cache directly from RAM but pass through a prefetch block, which is not shown in the diagram. The decoding block is also omitted: it converts data and commands into binary form, and only then can the processor work with them.)

The control unit, among other things, is responsible for calling the next command and determining its type.

The arithmetic logic unit, having received the data and the command, performs the specified operation and writes the result to one of the free registers.

The current command is held in a dedicated instruction register. While the current command is being processed, the value of the so-called program counter changes: it now points to the next command (unless, of course, there was a jump or stop command).

A command is often represented as a structure consisting of the operation to be performed and the addresses of the memory cells that hold the source data and the result. Data is taken from the addresses specified in the command and placed in ordinary registers (that is, not in the instruction register). The result also appears in a register first and only then is moved to the address specified in the command.
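
The cycle described above can be sketched as a toy simulator. This is only an illustration: the command format `(operation, source address 1, source address 2, result address)` and the operation names `ADD`, `SUB`, `JUMP`, and `HALT` are assumptions made for the example, not a real instruction set.

```python
# Toy model of the fetch-execute cycle. The command layout and the
# operation names are hypothetical, chosen only for illustration.

def run(program, memory):
    pc = 0  # program counter: index of the next command
    while pc < len(program):
        instruction = program[pc]  # fetch the command into the "instruction register"
        pc += 1                    # the counter now points to the next command
        op, src1, src2, dst = instruction
        if op == "HALT":           # stop command
            break
        if op == "JUMP":           # jump command: overwrite the program counter
            pc = dst
            continue
        reg_a, reg_b = memory[src1], memory[src2]  # load source data into registers
        if op == "ADD":
            reg_result = reg_a + reg_b
        elif op == "SUB":
            reg_result = reg_a - reg_b
        else:
            raise ValueError(f"unknown operation: {op}")
        memory[dst] = reg_result   # move the result from the register to its address
    return memory


program = [
    ("ADD", 0, 1, 2),   # memory[2] = memory[0] + memory[1]
    ("SUB", 2, 0, 3),   # memory[3] = memory[2] - memory[0]
    ("HALT", 0, 0, 0),
]
print(run(program, [7, 5, 0, 0]))  # [7, 5, 12, 5]
```

A real processor does the same steps in hardware and overlaps them, but the order (fetch, advance the counter, load operands, compute, store) is the same idea.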

Processor Specifications

Today, CPU clock frequency is measured in gigahertz (GHz); earlier it was measured in megahertz (MHz). 1 MHz = 1 million cycles per second.

The processor communicates with other devices (such as RAM) via the data, address, and control buses. Bus width is typically a multiple of 8 (which makes sense, since we are dealing with bytes). It has changed over the history of computer technology, differs between models, and is not the same for the data bus and the address bus.

Data bus width determines how much information (how many bytes) can be transferred at a time (per clock cycle). Address bus width determines the maximum amount of RAM the processor can work with at all.
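
The relationship between bus width and capacity is simple arithmetic. The sketch below assumes byte-addressable memory (each address refers to one byte); the function names are made up for the example.

```python
# Rough arithmetic for bus widths, assuming byte-addressable memory.
# Function names are hypothetical.

def max_addressable_bytes(address_bus_bits):
    """Each extra address line doubles the number of distinct addresses."""
    return 2 ** address_bus_bits


def bytes_per_transfer(data_bus_bits):
    """How many bytes the data bus can carry in one transfer."""
    return data_bus_bits // 8


print(max_addressable_bytes(16))              # 65536 bytes, i.e. 64 KiB
print(max_addressable_bytes(32) // 1024**3)   # 4 (GiB)
print(bytes_per_transfer(64))                 # 8 bytes per transfer
```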

The power (performance) of the processor is affected not only by its clock frequency and data bus width; cache memory also matters.

The modern electronics consumer is very hard to surprise. We are already used to a smartphone rightfully occupying our pocket, a laptop sitting in our bag, a “smart” watch obediently counting steps on our wrist, and earphones soothing our ears with active noise cancellation.

It's a funny thing, but we are used to carrying not one but two, three, or more computers at once. After all, that is what you can call any device that has a CPU. And it doesn't matter what a particular device looks like. A miniature chip that has come through a turbulent and rapid path of development is responsible for its work.

Why did we bring up the topic of processors? It's simple. Over the past ten years, there has been a real revolution in the world of mobile devices.

There are only 10 years between these devices. But back then the Nokia N95 seemed like a device from outer space, and today we look at ARKit with a certain mistrust.

But everything could have turned out differently, and the battered Pentium IV would have remained the ultimate dream of an ordinary buyer.

We have tried to do without complicated technical terms, explain how the processor works, and find out which architecture is the future.

1. How it all started

The first processors were completely different from what you see when you open the case of your PC's system unit.

In the 1940s, instead of microchips, computers used electromechanical relays supplemented with vacuum tubes. The tubes acted as diodes whose state could be controlled by lowering or raising the voltage in the circuit. The structures looked like this:

A single gigantic computer needed hundreds, sometimes thousands, of processors. At the same time, you would not be able to run even a simple editor such as Notepad or TextEdit, from the standard Windows and macOS sets, on such a computer. It simply would not have enough power.

2. The advent of transistors

The first field-effect transistors were proposed as early as 1928. But the world changed only after the so-called bipolar transistor was invented in 1947.

In the late 1940s, experimental physicist Walter Brattain and theorist John Bardeen developed the first point-contact transistor. In 1950, it was superseded by the first junction transistor, and in 1954 the well-known manufacturer Texas Instruments announced a silicon transistor.

But the real revolution came in 1959, when the scientist Jean Hoerni developed the first silicon planar (flat) transistor, which became the basis for monolithic integrated circuits.

Yes, it's a bit tricky, so let's dig a little deeper and deal with the theoretical part.

3. How a transistor works

So, the task of an electrical component such as a transistor is to control current. Simply put, this clever little switch controls the flow of electricity.

The main advantage of the transistor over a conventional switch is that it does not require a person to operate it. That is, such an element can control the current on its own. In addition, it works much faster than you could switch an electrical circuit on or off yourself.

From a school computer science course, you probably remember that a computer “understands” human language through combinations of only two states: “on” and “off”. For the machine, these are the states "0" and "1".

The task of the computer is to represent electricity in the form of numbers.

And if earlier the task of switching states was performed by clumsy, bulky and inefficient electrical relays, now the transistor has taken over this routine work.

From the beginning of the 60s, transistors began to be made from silicon, which made it possible not only to make processors more compact, but also to significantly increase their reliability.

But first, let's deal with the diode

Silicon (Si, "silicium" in the periodic table) is a semiconductor, which means that it conducts current better than a dielectric but worse than a metal.

Like it or not, to understand how processors work and how they developed further, we will have to dive into the structure of a single silicon atom. Don't worry, we'll keep it short and very clear.

The task of the transistor is to amplify a weak signal due to an additional power source.

The silicon atom has four valence electrons, with which it forms bonds (covalent bonds, to be precise) with four neighboring atoms of the same kind, forming a crystal lattice. While most of the electrons are bound, a small fraction of them can move through the crystal lattice. It is because of this partial transfer of electrons that silicon is classified as a semiconductor.

But such a weak movement of electrons would not make a transistor usable in practice, so scientists decided to improve performance by doping: adding atoms of other elements, with a characteristic arrangement of electrons, to the silicon crystal lattice.

So they began to use phosphorus, an impurity with five valence electrons, which produces n-type silicon. The extra electron is free to move, which increases the current flow.

For p-type silicon, the dopant is boron, which has three valence electrons. The missing electron leaves holes in the crystal lattice (they play the role of a positive charge), and because electrons are able to fill these holes, the conductivity of silicon increases significantly.

Suppose we take a silicon wafer and dope one part of it with a p-type impurity and the other with an n-type impurity. The result is a diode, the basic building block of a transistor.

Now the electrons in the n-part will tend to move into the holes in the p-part. As a result, the n-side near the junction acquires a slight positive charge and the p-side a slight negative charge. The electric field created by this attraction, the barrier, prevents further movement of electrons.

If you connect a power source to the diode so that "-" touches the p-side of the wafer and "+" touches the n-side, no current can flow: the holes are attracted to the negative contact of the power source and the electrons to the positive one, the depletion layer between the p- and n-regions widens, and the link between them is lost.

But if you connect a power supply with sufficient voltage the other way around, that is, "+" to the p-side and "-" to the n-side, the electrons on the n-side are repelled by the negative pole and pushed to the p-side, where they occupy holes in the p-region.

Now the electrons are attracted to the positive pole of the power source and keep moving through the holes of the p-region. A diode in this state is called forward biased.

diode + diode = transistor

The transistor itself can be thought of as two diodes joined back to back. The p-region (the one with the holes) is shared between them and is called the “base”.

An N-P-N transistor has two n-regions with extra electrons, the "emitter" and the "collector", and one thin region with holes, the p-region, called the "base".

If you connect a power supply (let's call it V1) across the n-regions of the transistor (in either polarity), one diode will be reverse biased and the transistor will be in the closed state.

But as soon as we connect another power source (let's call it V2), with the "+" contact on the central p-region (base) and the "-" contact on the n-region (emitter), some of the electrons will flow through the newly formed circuit (V2), and the rest will be attracted by the positive n-region. As a result, electrons will flow into the collector region, and a weak electric current will be amplified.

Exhale!

4. So how does a computer actually work?

And now the most important thing.

Depending on the applied voltage, the transistor can be either open or closed. If the voltage is insufficient to overcome the potential barrier (the one at the junction of the p- and n-regions), the transistor is closed: in the “off” state or, in binary terms, "0".

With enough voltage, the transistor opens, and we get the value "on", or "1" in binary.

This state, 0 or 1, is called a "bit" in the computer industry.

That is, we get the main property of the very switch that opened the way to computers for mankind!

ENIAC, the first electronic digital computer (or, more simply, the first computer), used about 18 thousand vacuum tubes. The computer was comparable in size to a tennis court and weighed 30 tons.

To understand how the processor works, there are two more key points to understand.

Point 1. So, we have established what a bit is. But with a single bit we can express only two properties of something: "yes" or "no". So that the computer could understand us better, a combination of 8 bits (each 0 or 1) was introduced and called a byte.

A byte can encode a number from 0 to 255. With these 256 values, combinations of zeros and ones, you can encode anything.
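
To see where the range 0 to 255 comes from, here is a minimal sketch that adds up the place values of the eight bits by hand. The helper name `byte_to_int` is made up for the example; Python's built-in `int(bits, 2)` does the same job.

```python
# Convert a string of 8 bits to a number by summing place values.
# byte_to_int is a hypothetical helper written for illustration.

def byte_to_int(bits):
    if len(bits) != 8 or set(bits) - {"0", "1"}:
        raise ValueError("expected exactly 8 binary digits")
    total = 0
    # The rightmost bit is worth 2**0, the next 2**1, and so on up to 2**7.
    for position, bit in enumerate(reversed(bits)):
        total += int(bit) * 2 ** position
    return total


print(byte_to_int("00000000"))  # 0, the smallest value
print(byte_to_int("00000101"))  # 5 = 4 + 1
print(byte_to_int("11111111"))  # 255, the largest value
print(2 ** 8)                   # 256 distinct combinations in total
```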

Point 2. Numbers and letters without any logic would give us nothing. That is why the concept of logical operators appeared.

By connecting just two transistors in a certain way, you can implement logical operations such as “and” and “or”. The combination of the voltage on each transistor and the way they are connected lets you obtain different combinations of zeros and ones.
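
As a simplified model, treat each transistor as a switch that is either open (1) or closed (0). Under that assumption, two switches in series behave like “and”, and two switches in parallel behave like “or”. The function names below are illustrative, and the model ignores real circuit details such as voltage levels.

```python
# Idealized switch model of two transistors: 1 = conducting, 0 = not conducting.
# Function names are hypothetical; real gates involve more than two bare switches.

def series(a, b):
    """Current passes only if both switches conduct: logical AND."""
    return a & b


def parallel(a, b):
    """Current passes if at least one switch conducts: logical OR."""
    return a | b


print(" a b | and or")
for a in (0, 1):
    for b in (0, 1):
        print(f" {a} {b} |  {series(a, b)}   {parallel(a, b)}")
```

The printed truth table lists every combination of the two inputs, which is exactly the set of "different combinations of zeros and ones" the connection type decides between.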

Through the efforts of programmers, the zeros and ones of the binary system began to be translated into decimal so that we could understand what exactly the computer “says”. And to enter commands, our usual actions, such as typing letters on the keyboard, are represented as binary sequences.

Simply put, imagine that there is a correspondence table, say ASCII, in which each letter corresponds to a combination of 0s and 1s. You press a key on the keyboard, and at that moment, thanks to the program, the transistors in the processor switch in such a way that the very letter printed on the key appears on the screen.
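
The ASCII correspondence can be tried out directly. The sketch below uses Python's standard string encoding; the helper names `to_bits` and `from_bits` are made up for the example.

```python
# Map text to ASCII bit patterns and back. Helper names are hypothetical.

def to_bits(text):
    """One 8-character string of 0s and 1s per ASCII character."""
    return [format(byte, "08b") for byte in text.encode("ascii")]


def from_bits(chunks):
    """Inverse of to_bits: rebuild the text from the bit patterns."""
    return bytes(int(chunk, 2) for chunk in chunks).decode("ascii")


print(ord("A"))                    # 65, the ASCII code of the letter A
print(to_bits("A"))                # ['01000001']
print(to_bits("Hi"))               # ['01001000', '01101001']
print(from_bits(to_bits("Hi")))    # Hi
```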

This is a rather primitive explanation of how the processor and the computer work, but it is this understanding that allows us to move on.

5. And the transistor race began

After the British radio engineer Geoffrey Dummer proposed in 1952 to place the simplest electronic components in a monolithic semiconductor crystal, the computer industry took a leap forward.

From the integrated circuits proposed by Dummer, engineers quickly moved on to microchips based on transistors. Several such chips together, in turn, formed a CPU.

Of course, such processors did not look much like modern ones in size. In addition, until 1964, all processors had one problem: each required an individual approach, its own programming language.

- 1964 IBM System/360. A computer with universally compatible program code. An instruction set for one processor model could be used for another.

- 1970s. The appearance of the first microprocessors. The single-chip processor from Intel: the Intel 4004, with a 10 µm process, 2,300 transistors, 740 kHz.

- 1973 Intel 4040 and Intel 8008. 3,000 transistors, 740 kHz for the Intel 4040 and 3,500 transistors at 500 kHz for the Intel 8008.

- 1974 Intel 8080. 6 µm process and 6,000 transistors. The clock frequency is about 5,000 kHz. It was this processor that was used in the Altair-8800 computer. The Soviet copy of the Intel 8080 is the KR580VM80A processor, developed by the Kiev Research Institute of Microdevices. 8-bit.

- 1976 Intel 8085. 3 µm process and 6,500 transistors. Clock frequency 6 MHz. 8-bit.

- 1976 Zilog Z80. 3 µm process and 8,500 transistors. Clock frequency up to 8 MHz. 8-bit.

- 1978 Intel 8086. 3 µm process and 29,000 transistors. The clock frequency is about 25 MHz. It introduced the x86 instruction set that is still in use today. 16-bit.

- 1980 Intel 80186. 3 µm process and 134,000 transistors. Clock frequency up to 25 MHz. 16-bit.

- 1982 Intel 80286. 1.5 µm process and 134,000 transistors. Frequency up to 12.5 MHz. 16-bit.

- 1982 Motorola 68000. 3 µm process and 84,000 transistors. This processor was used in the Apple Lisa computer.

- 1985 Intel 80386. 1.5 µm process and 275,000 transistors. Frequency up to 33 MHz in the 386SX version.

It would seem that the list could be continued indefinitely, but then Intel engineers faced a serious problem.

6. Moore's Law, or how chipmakers carry on

It was the late 1980s. Back in the mid-1960s, one of the founders of Intel, Gordon Moore, formulated the so-called "Moore's Law". It goes like this:

Every 24 months, the number of transistors on an integrated circuit chip doubles.

It is hard to call this a law; it is more accurately an empirical observation. Comparing the pace of technological development, Moore concluded that such a trend could emerge.
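
The doubling rule is easy to turn into arithmetic. The sketch below starts from the article's figure of 2,300 transistors for the Intel 4004 in 1971 and projects forward; the function name is made up, and the 1989 release year of the i486 is an assumption not stated in the article.

```python
# Projection under Moore's Law: the count doubles every `doubling_months`.
# projected_transistors is a hypothetical helper for illustration.

def projected_transistors(start_count, start_year, year, doubling_months=24):
    doublings = (year - start_year) * 12 / doubling_months
    return start_count * 2 ** doublings


# Starting point from the article: Intel 4004, 2,300 transistors, 1971.
print(projected_transistors(2300, 1971, 1973))  # 4600.0 (one doubling)
print(projected_transistors(2300, 1971, 1989))  # 1177600.0 (nine doublings)
```

The 1989 projection of roughly 1.18 million is close to the 1.2 million transistors the article quotes for the i486, which shows how well the observation held over those years.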

But already while developing the fourth-generation Intel i486 processors, engineers found that they had hit the performance ceiling and could no longer fit more transistors into the same area. At that time, technology did not allow it.

As a solution, a number of additional elements were introduced:

- cache memory;

- pipeline;

- built-in coprocessor;

- multiplier.

Part of the computational load fell on the shoulders of these four components. As a result, cache memory, on the one hand, complicated the design of the processor, but on the other hand made it much more powerful.

The Intel i486 processor already consisted of 1.2 million transistors, and the maximum frequency of its operation reached 50 MHz.

In 1995, AMD joined the race and released the Am5x86, the fastest i486-compatible processor of its time, built on a 32-bit architecture. It was manufactured on a 350-nanometer process, and the number of transistors reached 1.6 million. The clock frequency rose to 133 MHz.

But chipmakers did not dare to keep increasing the number of transistors on a chip and to keep developing the already utopian CISC (Complex Instruction Set Computing) architecture. Instead, the American engineer David Patterson proposed optimizing processors by keeping only the most necessary computational instructions.

So processor manufacturers switched to the RISC (Reduced Instruction Set Computing) platform. But even this was not enough.

In 1991, the 64-bit R4000 processor was released, operating at a frequency of 100 MHz. Three years later, the R8000 processor appears, and two years later, the R10000 with clock speeds up to 195 MHz. In parallel, the market for SPARC processors developed, the architecture feature of which was the absence of multiplication and division instructions.

Instead of fighting over the number of transistors, chip manufacturers began to rethink the architecture of their chips. Dropping "unnecessary" commands, executing instructions in one cycle, general-purpose registers, and pipelining made it possible to quickly raise the clock frequency and power of processors without inflating the number of transistors.

Here are just a few of the architectures that appeared between 1980 and 1995:

- SPARC;

- ARM;

- PowerPC;

- Intel P5;

- AMD K5;

- Intel P6.

They were based on the RISC platform and, in some cases, on a partial, combined use of the CISC platform. But the development of technology once again pushed chipmakers to keep scaling processors up.

In August 1999, the AMD K7 Athlon entered the market, manufactured on a 250 nm process and containing 22 million transistors. Later, the bar was raised to 38 million transistors. Then to 250 million.

Process technology advanced and clock frequencies rose. But, as physics tells us, there is a limit to everything.

7. The end of the transistor competition is near

In 2007, Gordon Moore made a very blunt statement:

Moore's Law will soon cease to apply. It is impossible to keep adding an unlimited number of transistors indefinitely. The reason for this is the atomic nature of matter.

It is obvious to the naked eye that the two leading chip manufacturers, AMD and Intel, have clearly slowed the pace of processor development over the past few years. Process precision has reached just a few nanometers, but it is impossible to fit in even more transistors.

And while semiconductor manufacturers threaten to launch multilayer transistors, drawing a parallel with 3D NAND memory, a serious competitor to the x86 architecture, which has hit a wall, appeared 30 years ago.

8. What awaits "regular" processors

Moore's Law has no longer held since 2016. This was officially announced by Intel, the largest processor manufacturer. Doubling computing power every two years is no longer possible for chipmakers.

And now processor manufacturers have several options, none of them very promising.

The first option is quantum computers. There have already been attempts to build a computer that uses particles to represent information. There are several similar quantum devices in the world, but they can only cope with algorithms of low complexity.

In addition, mass production of such devices in the coming decades is out of the question. They are expensive, inefficient and… slow!

Yes, quantum computers consume much less power than their modern counterparts, but they will also be slower until developers and component manufacturers switch to new technology.

The second option is processors with several layers of transistors. Both Intel and AMD have seriously considered this technology. Instead of one layer of transistors, they plan to use several. It seems that in the coming years processors may well appear in which not only the number of cores and the clock frequency matter, but also the number of transistor layers.

The solution has a right to exist, and it will let the monopolists milk the consumer for another couple of decades, but in the end the technology will hit the ceiling again.

Today, aware of the rapid development of the ARM architecture, Intel has quietly announced the Ice Lake family of chips. The processors will be manufactured on a 10 nm process and will become the basis for smartphones, tablets, and mobile devices. But that will happen in 2019.

9. ARM is the future

So, the x86 architecture appeared in 1978 and belongs to the CISC type of platform. That is, it implies the existence of instructions for all occasions. Versatility is the main strength of x86.

But at the same time, versatility has played a cruel joke on these processors. x86 has several key disadvantages:

- the complexity of commands and their outright convolutedness;

- high energy consumption and heat release.

High performance came at the cost of energy efficiency. Moreover, only two companies, which can safely be called monopolists, are currently working on the x86 architecture: Intel and AMD. Only they can produce x86 processors, which means that only they steer the development of the technology.

At the same time, several companies are involved in the development of ARM (Acorn RISC Machine). Back in 1985, its developers chose the RISC platform as the basis for further development of the architecture.

Unlike CISC, RISC involves designing a processor with the minimum required number of instructions, but maximum optimization. RISC processors are much smaller than CISC, more power efficient and simpler.

Moreover, ARM was originally created solely as a competitor to x86. The developers set the task to build an architecture that is more efficient than x86.

Since the 1940s, engineers have understood that one of the priority tasks is to reduce the size of computers, and, first of all, the processors themselves. But almost 80 years ago, hardly anyone could have imagined that a full-fledged computer would be smaller than a matchbox.

The ARM architecture was once supported by Apple, which launched the production of Newton tablets based on the ARM6 family of ARM processors.

Sales of desktop computers are falling rapidly, while the number of mobile devices sold annually is already in the billions. Often, in addition to performance, when choosing an electronic gadget, the user is interested in several more criteria:

- mobility;

- autonomy.

The x86 architecture is strong in terms of performance, but if you forego active cooling, the powerful processor will seem pathetic compared to the ARM architecture.

10. Why ARM is the undisputed leader

You will hardly be surprised that your smartphone, whether it's a simple Android or Apple's 2016 flagship, is dozens of times more powerful than full-fledged computers from the late 90s.

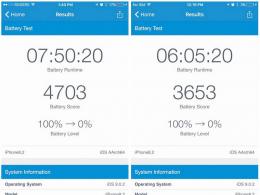

But how much more powerful is the same iPhone?

In itself, comparing two different architectures is a very difficult thing. Measurements here can only be performed approximately, but you can understand the enormous advantage that smartphone processors built on the ARM architecture provide.

A universal assistant in this matter is the synthetic Geekbench performance test. The utility is available both on desktop computers and on the Android and iOS platforms.

Mid-range and entry-level laptops clearly lag behind the iPhone 7 in performance. In the top segment, everything is a little more complicated, but in 2017 Apple launched the iPhone X with the new A11 Bionic chip.

There, the ARM architecture is the one you already know, but the Geekbench figures have almost doubled. Laptops from the "higher echelon" tensed up.

And it's only been one year.

ARM is developing by leaps and bounds. While Intel and AMD show 5-10% performance gains year after year, over the same period smartphone manufacturers manage to increase processor power by two to two and a half times.

Skeptical users who browse the top lines of Geekbench just need a reminder: in mobile technology, size is what matters most.

Put an all-in-one PC with a powerful 18-core processor that “rips the ARM architecture to shreds” on the table, and then put your iPhone next to it. Feel the difference?

11. Instead of a conclusion

It is impossible to cover the 80-year history of the development of computers in one article. But after reading this one, you will be able to understand how the main element of any computer, the processor, is arranged, and what to expect from the market in the coming years.

Of course, Intel and AMD will work on further increasing the number of transistors on a single chip and promoting the idea of multilayer elements.

But do you, as a customer, need such power?

You are unlikely to be dissatisfied with the performance of the iPad Pro or the flagship iPhone X. I don't think you're unhappy with the performance of the multicooker in your kitchen or the picture quality on a 65-inch 4K TV. Yet all these devices use processors based on the ARM architecture.

Microsoft has already officially announced that it is looking towards ARM with interest. The company included support for this architecture back in Windows 8.1 and is now actively working in tandem with the leading ARM chipmaker Qualcomm.

Hello, dear readers. Today we will show you what a processor consists of on the inside. Many users have, of course, had experience installing a processor on a motherboard, but not many know what it looks like inside. We will try to explain it in plain language so that it is clear, but without omitting the details. Before talking about the component parts of the processor, you may want to get acquainted with a very curious Russian design, Elbrus.

Many users believe that the processor looks exactly as shown in the picture.

However, this is the entire assembly, which consists of smaller and more vital parts. Let's see what the processor consists of from the inside. The processor includes:

In the figure above, number 1 shows a protective cover that provides mechanical protection against dust and other small particles. The cover is made of a material that has a high coefficient of thermal conductivity, which allows you to take excess heat from the crystal, thereby ensuring a normal temperature range for the processor.

Number 2 shows the "brain" of the processor and of the computer as a whole: the die (crystal). It is considered the "smartest" element of the processor and performs all the tasks assigned to it. You can see that a circuit is applied to the die in a thin layer, which provides the processor's specified functioning. Most often, processor dies are made of silicon: this is because silicon is a semiconductor, so its conductivity can be controlled to form the internal currents the processor relies on.

Number 3 shows the textolite substrate, to which everything else is attached: the die and the cover. This substrate also acts as a good conductor, providing good electrical contact with the die. On its reverse side, to improve electrical conductivity, there are many contact points made of precious metal (sometimes even gold is used).

Here's what the conductive dots look like on an Intel processor.

The shape of the contacts depends on the socket on the motherboard. Sometimes, instead of contact points, the back of the substrate has pins that play the same role. As a rule, for Intel processors the pins are located in the motherboard socket itself, and the substrate carries contact points. For AMD processors, the pins are located directly on the substrate. Such processors look like this.

Now consider how all the parts are fastened together. To hold the cover firmly on the substrate, it is “seated” on a special adhesive sealant that is resistant to high temperatures. This keeps the structure permanently bonded without compromising its integrity.

In order to prevent the crystal from overheating, a special gasket 1 is applied to it, on top of which, in turn, thermal paste 2 is applied, which ensures efficient heat removal to the cover. The cover is also “lubricated” from the inside with thermal paste.

Let's now see what a dual-core processor looks like. A core is a separate, functionally independent die installed alongside another on the substrate. It looks like this.

Thus, 2 cores installed side by side increase the total processor power. However, if you see 2 dies side by side, this does not always mean that you have a dual-core processor. In some processors, 2 dies are installed, one of which is responsible for the arithmetic-logic part and the other for graphics processing (a kind of built-in GPU). This helps when you have a built-in video card that is not powerful enough to cope, for example, with some game. In less demanding cases, the lion's share of the calculations is taken over by the graphics part of the central processor. This is what a processor with a graphics core looks like.

So, friends, we have figured out what the processor consists of. It is now clear that all the parts that make up the processor play an important and indispensable role in its proper operation.

| Article authors: Gvindzhilia Grigory and Pashchenko Sergey |

While Intel alternately introduces new technologies along with small changes, AMD makes big steps in production at regular intervals. The above photo shows the models of the mentioned companies with a distinctive
